Availability of the component: the reference contact is Jerome Urbain of Université de Mons.

Notes: ACAPELA S.A. is the reference licensor of the component and also supports commercial deployments.

Besides functional tests, the component is tested extensively in the CALLAS Scientific Showcases:

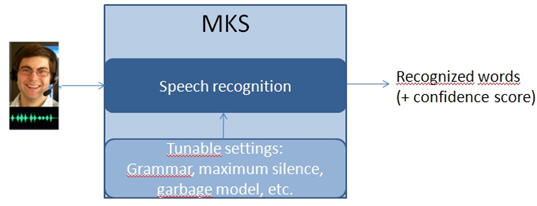

- the e-Tree: in this Augmented Reality art installation the component recognises predefined sequences of words together with their confidence rates.

- the CommonTouch (collective empathic navigation of slogans on a public multitouch screen): the component is used to recognise the slogans' vocabulary when spoken aloud, as well as affective vocabulary in the users' speech.

- the Musickiosk and Interactive Opera use the component for selection of characters, activation and restarting, with emotional keywords weighted and combined with other input modalities into P-A-D values for emotion estimation.
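The weighting and combination of emotional keywords into P-A-D values described for the Musickiosk and Interactive Opera can be illustrated with a minimal sketch. It is not the CALLAS implementation: the keyword lexicon, its P-A-D values, the function names `keywords_to_pad` and `fuse`, and the use of a confidence-weighted average are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PAD:
    """A point in Pleasure-Arousal-Dominance space, each axis in [-1, 1]."""
    pleasure: float
    arousal: float
    dominance: float


# Hypothetical lexicon: the keywords and their P-A-D values are invented
# for this sketch and are not taken from the CALLAS showcases.
KEYWORD_PAD = {
    "wonderful": PAD(0.8, 0.5, 0.4),
    "boring": PAD(-0.5, -0.6, -0.2),
    "scary": PAD(-0.6, 0.7, -0.6),
}


def weighted_mean(items):
    """Average (PAD, weight) pairs; return a neutral PAD if weights sum to zero."""
    total = sum(weight for _, weight in items)
    if total == 0:
        return PAD(0.0, 0.0, 0.0)
    return PAD(
        sum(pad.pleasure * weight for pad, weight in items) / total,
        sum(pad.arousal * weight for pad, weight in items) / total,
        sum(pad.dominance * weight for pad, weight in items) / total,
    )


def keywords_to_pad(hits):
    """Map recognised (keyword, confidence) pairs to one P-A-D estimate.

    Keywords missing from the lexicon are ignored; the recogniser's
    confidence rate is used as the weight of each keyword (an assumption).
    """
    known = [(KEYWORD_PAD[word], conf) for word, conf in hits if word in KEYWORD_PAD]
    return weighted_mean(known)


def fuse(estimates):
    """Combine per-modality (PAD, weight) estimates into a single P-A-D value."""
    return weighted_mean(estimates)


if __name__ == "__main__":
    speech = keywords_to_pad([("wonderful", 0.9), ("scary", 0.3)])
    # Hypothetical estimate from a second modality, e.g. video analysis.
    video = PAD(0.2, 0.1, 0.0)
    print(fuse([(speech, 0.6), (video, 0.4)]))
```

Using the confidence rate as the keyword weight means that poorly recognised words contribute little to the estimate; a real fusion scheme might also apply temporal smoothing or per-modality reliability, which this sketch leaves out.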

Using Affective Trajectories to Describe States of Flow in Interactive Art: Abstract

PAD-based Multimodal Affective Fusion: Abstract

E-Tree: Emotionally Driven Augmented Reality Art: Abstract

An Emotionally Responsive AR Art Installation: Abstract

Developing Affective Intelligence For An Interactive Installation: Insights From A Design Process: Abstract