One CALLAS Proof-of-Concept targeted affective edutainment: an entertainment experience for young audiences built around an interactive, assisted classical music composition system. Known as MusicKiosk, it is a museum installation that lets children and adults build musical stories from their emotional expressions. The goal is to prototype a publicly accessible application, for users of all ages, that explores how emotional reactions can be converted into a musical performance. See poster.

The Proof-of-Concept is built around the full "Concerto Storico" story, based on a concept by Paola Pacetti (Director of the Children's Museum of Palazzo Vecchio, Firenze, Italy). The system experiments with natural interaction and with emotional feedback loops that engage young visitors through interaction and reaction.

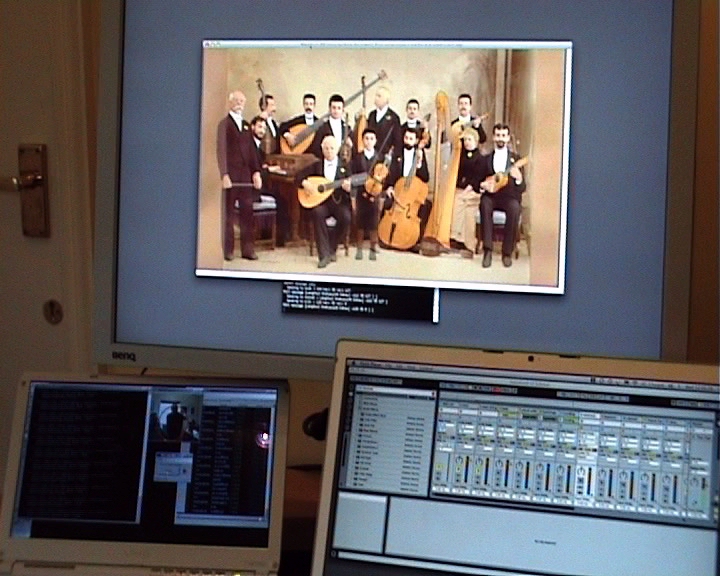

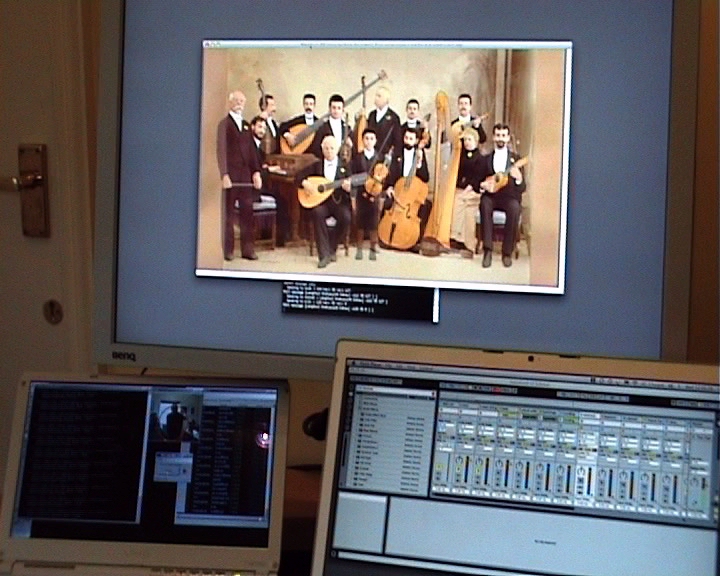

The installation presents an audio-visual story in which animated characters, affected by the emotional input, are displayed on a screen, playing and reacting to the score at the same time. The system creates a composition based on the emotional states detected from users' voices, and augments the experience by visualizing the music with interactive, animated characters.

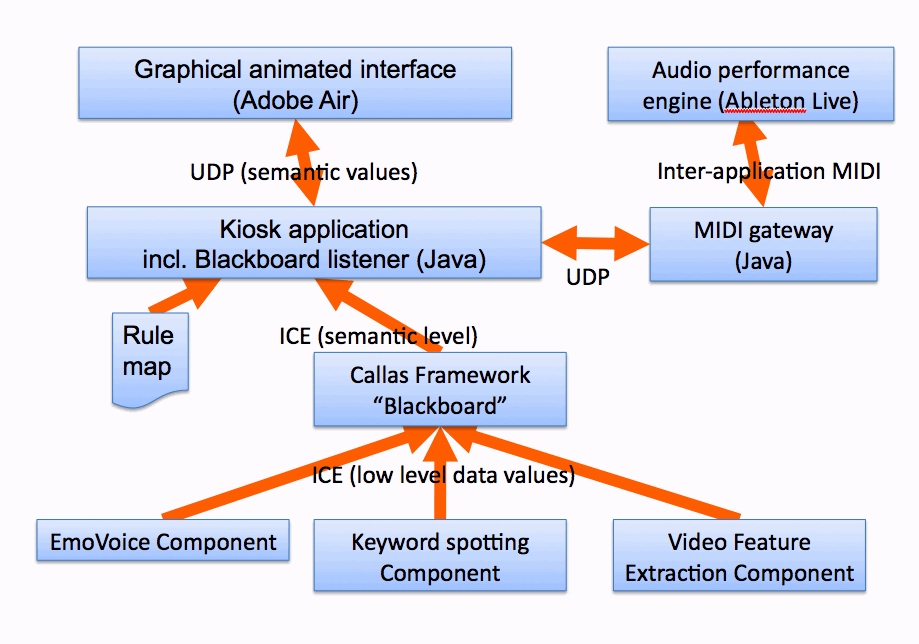

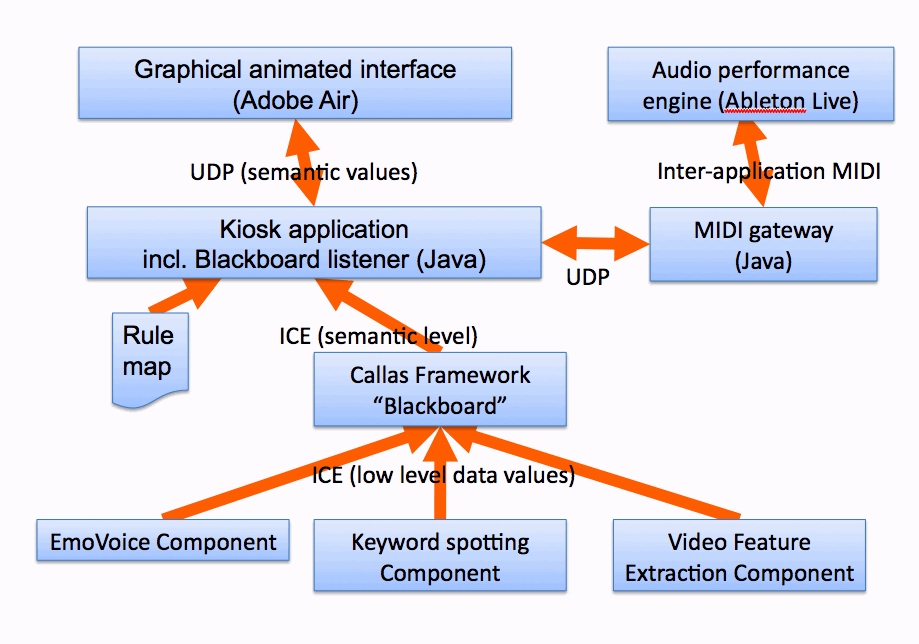

Emotions detected from face and speech are captured and then fused through the CALLAS Framework along the dimensions of a PAD (pleasure, arousal, dominance) model. The resulting estimate of the user's emotional state is rendered as music by the Affective Music Synthesis component, which also indicates which of the three rooms in the kiosk is active at a given point in time, so that the relevant instrumentation is used in the music synthesis process.
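
The pipeline described above can be illustrated with a minimal sketch. It is not the CALLAS implementation: the confidence-weighted average used for fusion, the room names, the instrument lists and the tempo and mode mapping are all assumptions chosen only to show how per-modality PAD estimates could be combined and turned into synthesis parameters.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PAD:
    """Pleasure, arousal and dominance, each assumed to lie in [-1, 1]."""
    pleasure: float
    arousal: float
    dominance: float


def fuse(estimates):
    """Confidence-weighted average of (PAD, confidence) pairs.

    Assumption: the real framework may use a different fusion rule.
    """
    total = sum(conf for _, conf in estimates)
    if total <= 0:
        return PAD(0.0, 0.0, 0.0)  # fall back to a neutral state
    return PAD(
        sum(p.pleasure * c for p, c in estimates) / total,
        sum(p.arousal * c for p, c in estimates) / total,
        sum(p.dominance * c for p, c in estimates) / total,
    )


# Hypothetical rooms and instrumentation; the source only says there are three rooms.
ROOM_INSTRUMENTS = {
    "room_1": ["strings"],
    "room_2": ["woodwinds"],
    "room_3": ["brass", "percussion"],
}


def music_parameters(state, room):
    """Map a fused PAD state and the active room to simple synthesis parameters."""
    tempo = 90 + 40 * state.arousal                      # 50 to 130 bpm
    mode = "major" if state.pleasure >= 0 else "minor"   # pleasant -> major
    return {
        "tempo_bpm": round(tempo),
        "mode": mode,
        "instruments": ROOM_INSTRUMENTS[room],
    }


face = (PAD(0.8, 0.4, 0.0), 0.5)     # (estimate, confidence) from the face channel
speech = (PAD(0.2, 0.8, 0.0), 0.5)   # (estimate, confidence) from the speech channel
fused = fuse([face, speech])
params = music_parameters(fused, "room_2")
```

Keeping fusion and rendering as separate functions mirrors the split in the text between the framework's fusion step and the Affective Music Synthesis component.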

Custom-made musical elements can be dynamically added or removed according to the mood, which is detected by fusing Real-time emotion recognition from speech, Multi Keyword Spotting and Video-Based Gesture Expressivity Features Extraction.
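
As a rough illustration of adding and removing musical elements by mood, the sketch below switches layers on and off from a single fused arousal value. The layer names, their arousal ranges and the hysteresis margin are hypothetical; the source does not describe how the actual system selects elements.

```python
# Hypothetical layers, each with the arousal range in which it should sound.
LAYERS = {
    "melody": (-1.0, 1.0),
    "percussion": (0.3, 1.0),
    "soft_pad": (-1.0, 0.0),
}


def update_layers(active, arousal, margin=0.1):
    """Return the new set of active layers for the given arousal value.

    A layer is added when arousal enters its range and removed only once
    arousal leaves the range by more than `margin`, so that small
    fluctuations in the detected mood do not make layers flicker.
    """
    result = set(active)
    for name, (low, high) in LAYERS.items():
        if name in active:
            if arousal < low - margin or arousal > high + margin:
                result.discard(name)
        elif low <= arousal <= high:
            result.add(name)
    return result


layers = update_layers(set(), 0.5)      # excited visitor: melody and percussion
layers = update_layers(layers, 0.25)    # slight drop: percussion is kept
calmer = update_layers(layers, 0.1)     # clear drop: percussion is removed
```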

Reference articles and suggested reading:

- Affective Interface Adaptations in the Musickiosk Interactive Entertainment Applications: Abstract

- MusicKiosk: When listeners become composers. An exploration into affective, interactive music: Abstract
